This article shows how to run multiple cURL commands in parallel or sequentially. The main tools are:

- `xargs`
- cURL's `--parallel` option
- `for` loops in Bash
- `seq`

Below are several examples of sending concurrent requests with cURL.

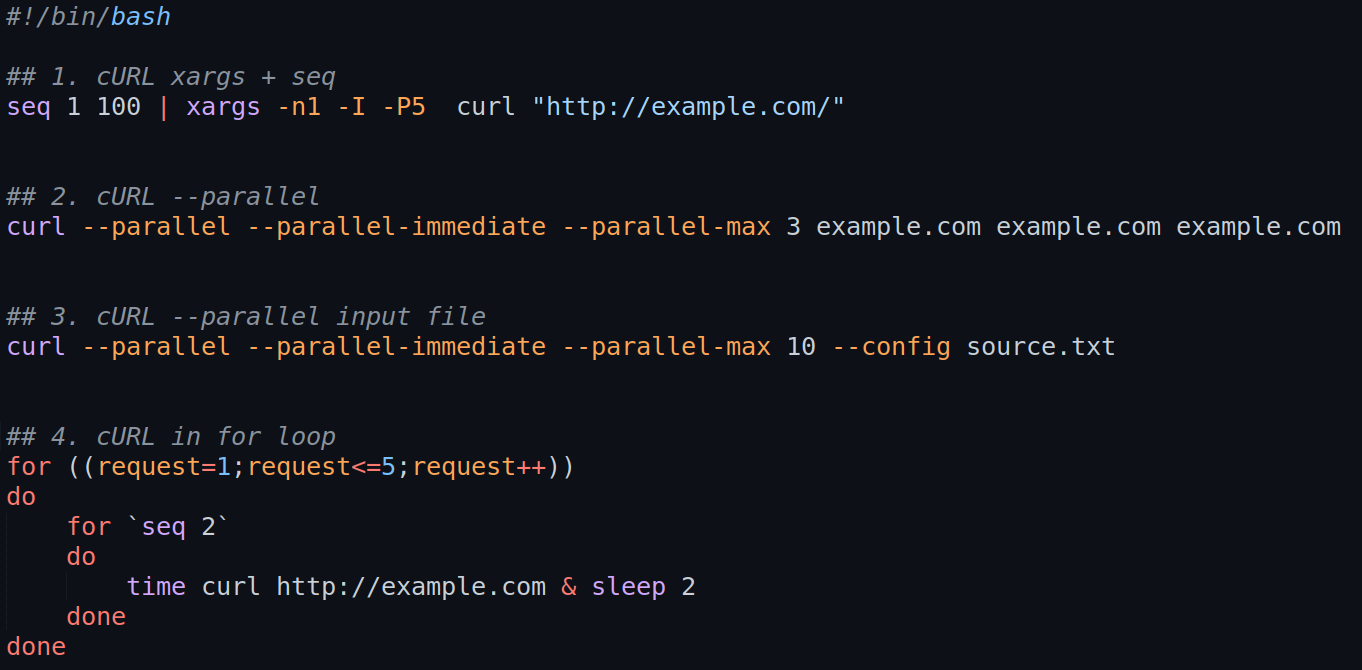

1. cURL xargs + seq

To send parallel requests with cURL, pipe a sequence of numbers from `seq` into `xargs` and set the number of concurrent jobs with `-P`:

```shell
seq 1 100 | xargs -I{} -P5 curl "http://example.com/"
```

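This runs 100 cURL commands, at most 5 at a time. `-I{}` makes `xargs` start one `curl` per input line without appending the number to the command. Put `{}` in the URL if each request should receive it.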
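When each request needs its own URL or output file, the `{}` placeholder can carry the number from `seq` into the `curl` command. The following is a minimal sketch. It assumes a hypothetical endpoint where `BASE/1`, `BASE/2`, ... are valid URLs. The function name `fetch_numbered` and the output layout are illustrative only:

```shell
#!/usr/bin/env bash
# Hypothetical helper: fetch BASE/1 .. BASE/COUNT with at most JOBS curl
# processes at once. xargs replaces {} with the number read from seq, so
# every request gets its own URL path and its own output file.
fetch_numbered() {
    local base=$1 count=$2 jobs=$3 outdir=$4
    mkdir -p "$outdir"
    seq 1 "$count" |
        xargs -I{} -P"$jobs" curl -s -o "$outdir/{}.out" "$base/{}"
}
```

For example, `fetch_numbered "http://example.com/items" 100 5 responses` would request `/items/1` to `/items/100` five at a time. The `/items/<n>` path is only an assumption. Because each response goes to a separate file, the output of concurrent transfers cannot mix on stdout.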

2. cURL --parallel list of URLs

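cURL 7.66.0 and later can perform several transfers in parallel with the `--parallel` (`-Z`) option.

To make at most 3 parallel requests to a list of URLs given on the command line:

```shell
curl --parallel --parallel-immediate --parallel-max 3 example.com example.com example.com
```

`--parallel-max` sets the maximum number of simultaneous transfers (50 by default). `--parallel-immediate` (cURL 7.68.0 and later) tells curl to open new connections right away instead of waiting to see whether an existing connection can be multiplexed.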
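You do not have to type the URL list by hand. The sketch below assumes the URLs come from a Bash array (or the function's arguments). It builds a single `curl --parallel` call and pairs each URL with its own `-o` file. `parallel_fetch` is a hypothetical helper name:

```shell
#!/usr/bin/env bash
# Hypothetical helper: download every URL argument with one curl process,
# running at most MAX transfers at a time. Response i is saved to OUTDIR/i.out.
# Assumes curl >= 7.68.0 (needed for --parallel-immediate).
parallel_fetch() {
    local outdir=$1 max=$2
    shift 2
    mkdir -p "$outdir"
    local args=() i=0 url
    for url in "$@"; do
        i=$((i + 1))
        # curl matches the Nth -o with the Nth URL
        args+=(-o "$outdir/$i.out" "$url")
    done
    curl --silent --parallel --parallel-immediate --parallel-max "$max" "${args[@]}"
}
```

Usage with an array: `urls=(https://example.com/ https://example.org/); parallel_fetch out 3 "${urls[@]}"`.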

3. cURL in for loop

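A Bash `for` loop can also launch multiple cURL commands. Sending each `curl` to the background with `&` lets two nested loops simulate a parallel run:

- `for ((request=1;request<=5;request++))`: iterates from 1 to 5
- `for i in $(seq 2)`: starts two cURL calls per outer iteration
- both ways of generating iterations are nearly equivalent

```shell
for ((request=1;request<=5;request++))
do
    for i in $(seq 2)
    do
        # start curl in the background, then pause for 2 seconds
        time curl http://example.com &
        sleep 2
    done
done
wait  # let the last background requests finish before the script exits
```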
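The loop above uses `sleep 2` to space out requests, so how many of them overlap depends on response time. The variant below swaps the sleep for Bash's `wait`. Each batch of background jobs runs in parallel and must finish before the next batch starts. `run_batches` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: run a command PER_BATCH times in parallel, wait for
# the whole batch to finish, then start the next batch.
run_batches() {
    local batches=$1 per_batch=$2 b j
    shift 2
    for ((b = 1; b <= batches; b++)); do
        for ((j = 1; j <= per_batch; j++)); do
            "$@" &    # start one copy of the command in the background
        done
        wait          # block until every background job has exited
    done
}
```

`run_batches 5 2 curl -s -o /dev/null http://example.com` would mirror the 5 × 2 loop above. Note that `wait` without arguments also waits for any background jobs the shell started before the call.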

4. cURL --parallel input file

We can also read the URLs from a file passed with `--config` (`-K`):

```shell
curl --parallel --parallel-immediate --parallel-max 10 --config source.txt
```

This sends up to 10 parallel requests to the URLs listed in `source.txt`. The file uses curl's config syntax, with one `url` entry per request:

```
url = "example.com"
url = "example.com"
url = "example.com"
```
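You can also generate the config file from a list of URLs. The sketch below assumes you want each response saved to its own file, using the config file's `output` option. `write_curl_config` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a curl config file with one url/output pair per
# URL, so `curl --parallel --config CONFIG` saves response i to OUTDIR/i.out.
# Values are wrapped in double quotes; URLs or paths containing `"` or `\`
# would need escaping, which is not handled here.
write_curl_config() {
    local config=$1 outdir=$2 i=0 url
    shift 2
    : > "$config"                     # start with an empty file
    for url in "$@"; do
        i=$((i + 1))
        printf 'url = "%s"\noutput = "%s/%s.out"\n' "$url" "$outdir" "$i" >> "$config"
    done
}
```

Then run `mkdir -p out && write_curl_config source.txt out https://example.com/ https://example.org/ && curl --parallel --parallel-max 10 --config source.txt`.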

5. multiple sequential cURL requests + proxy

Finally, we can combine the techniques above to run multiple cURL requests driven by a file or an array of values.

We will also send the requests through a proxy to reach another site or subdomain:

- `http://proxy.example.com:7878`: proxy host and port
- `proxy_pass="user-${country}-${sidnum}:pass"`: proxy user and password (or other headers). The country is used as a parameter, and a random number serves as the session ID.
- `jq -c .`: converts the JSON response to JSON Lines
- `>> data_$country.csv`: appends the JSON Lines to a per-country file

```shell
#!/bin/bash
for country in US BR FR
do
    for ((request=1;request<=100;request++))
    do
        for ((r=1;r<=10;r++))
        do
            # random session id between 0 and 999999
            sidnum=$(shuf -i 0-999999 -n 1)
            proxy_pass="user-${country}-${sidnum}:pass"
            echo "$proxy_pass"
            # fetch in the background, compact the JSON, append to the country's file
            curl -s -x http://proxy.example.com:7878 --proxy-user "$proxy_pass" -L "https://example.com/" | jq -c . >> "data_$country.csv" &
            sleep 1
        done
    done
done
wait
```

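Running the script above sends 100 × 10 = 1000 cURL requests per country. Each request runs in the background and a new one starts every second. How many run at the same time therefore depends on how long each response takes.

Example output of the `echo` line, with the loop limits reduced to 1 and 2:

```
user-US-246150:pass
user-US-766402:pass
user-BR-623033:pass
user-BR-71104:pass
user-FR-412842:pass
user-FR-653389:pass
```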
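To enforce a hard limit of 10 in-flight requests instead of pacing them with `sleep 1`, one option is to combine a job counter with Bash's `wait -n` (Bash 4.3 or later). The sketch below uses a hypothetical helper, `run_throttled`, and assumes the calling shell has no other background jobs:

```shell
#!/usr/bin/env bash
# Hypothetical helper: run a command COUNT times, never more than MAX copies
# at once. Requires bash >= 4.3 for `wait -n`. Assumes no other background
# jobs exist in this shell, otherwise `wait -n` may return for one of them.
run_throttled() {
    local max=$1 count=$2 i running=0
    shift 2
    for ((i = 1; i <= count; i++)); do
        if (( running >= max )); then
            wait -n                   # wait for any one background job to exit
            running=$((running - 1))
        fi
        "$@" &
        running=$((running + 1))
    done
    wait                              # wait for the jobs still running
}
```

For example, `run_throttled 10 1000 curl -s -o /dev/null "https://example.com/"` would keep at most 10 requests running. To keep the per-request proxy session and the `jq` step, pass the name of a small function that wraps the `curl | jq` pipeline instead of `curl`.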

6. multiple parallel cURL requests + seq + xargs

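In this example we run multiple cURL requests in parallel with xargs and pass parameters to them:

```shell
#!/bin/bash
for country in US BR FR
do
    for i in $(seq 100)
    do
        proxy_pass="user-${country}:pass"
        #echo "$proxy_pass"
        # 30 requests, 15 at a time; the whole batch runs in the background
        seq 1 30 | xargs -I{} -P15 -- curl -s -x http://proxy.example.com:7878 --proxy-user "$proxy_pass" -L "https://example.com/" | jq -c . >> "data_$country.csv" &
        sleep 3
    done
done
wait
```

- `for country in US BR FR`: the array of values used as input
- `for i in $(seq 100)`: repeats the parallel execution 100 times
- `proxy_pass="user-${country}:pass"`: proxy settings, if any
- `seq 1 30`: 30 cURL requests per batch
- `xargs -I{} -P15`: runs up to 15 of them at once
- `| jq -c .`: converts JSON to JSON Lines
- `>> data_$country.csv`: appends the results to a per-country file

All concurrent transfers in a batch write into the same pipe to `jq`, so large responses may interleave and produce invalid JSON. Writing each response to its own file avoids this.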
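The script above sets `-I{}` but never uses `{}`, so every request gets identical arguments. The sketch below shows how to pass a real parameter through xargs. It assumes one request per country code, with the code substituted into both the proxy user name and the output file name. `fetch_per_country` and the `CURL` override are hypothetical; the override is a dry-run hook, e.g. `CURL=echo`:

```shell
#!/usr/bin/env bash
# Hypothetical helper: one request per country code, JOBS at a time.
# xargs substitutes the code for {} in the proxy user name and in the
# output file name, so each job writes its own file.
# CURL is a hypothetical override: CURL=echo prints the commands instead.
fetch_per_country() {
    local url=$1 proxy=$2 jobs=$3
    shift 3
    printf '%s\n' "$@" |
        xargs -I{} -P"$jobs" "${CURL:-curl}" -s -x "$proxy" \
            --proxy-user "user-{}:pass" -o "data_{}.json" "$url"
}
```

`CURL=echo fetch_per_country "https://example.com/" "http://proxy.example.com:7878" 3 US BR FR` prints the three `curl` command lines without sending anything. Drop `CURL=echo` to run them.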

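Note: if you need to know which item each parallel job is handling, use the `{}` placeholder defined by `-I{}`:

```shell
seq 1 4 | xargs -I{} -P2 -- echo {}
```

This produces output like the following. With `-P2`, the order of the lines is not guaranteed:

```
1
2
3
4
```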
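If you need the job number inside a shell command, pass `{}` to `sh -c` as a positional argument rather than pasting it into the script text. Sorting the output then restores a stable order. The sketch below uses a hypothetical helper, `run_numbered_jobs`:

```shell
#!/usr/bin/env bash
# Hypothetical helper: run COUNT jobs, JOBS at a time. Each job receives its
# number as $1 (not spliced into the script text) and prints "job <n> done".
# Jobs can finish in any order, so sort numerically on the second field.
run_numbered_jobs() {
    local count=$1 jobs=$2
    seq 1 "$count" |
        xargs -I{} -P"$jobs" sh -c 'echo "job $1 done"' _ {} |
        sort -n -k2
}
```

`run_numbered_jobs 4 2` prints `job 1 done` through `job 4 done` in order, regardless of which job finished first.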

summary

In this article we discussed how to run multiple cURL commands in parallel. We saw examples of sequential runs, parallel runs with parameters, proxy setup and writing to a file.

We saw how to chain cURL commands and tried to answer questions like:

- How do I run multiple curl requests in parallel?

- How can I run multiple curl requests sequentially?

- How do you automate curl commands?

- How do you pass multiple headers in curl?

Other topics discussed briefly:

- run multiple curl commands in parallel

- curl --parallel --parallel-immediate

- curl multiple post requests

- curl repeat every second

- curl parallel download

- curl --parallel --parallel-max